What Is HDR?

Dynamic range is a term engineers and physicists use to define the full range of values that a piece of technology can handle. For example, in film, the brightness dynamic range is bounded by the lowest amount of light that a piece of film can capture (go any darker, and you can’t detect the difference) and the largest amount of light that the film can tolerate before it saturates (go any brighter, and you can’t detect the difference, because the film has absorbed as much light as it can). In audio, the range from silence to the loudest level that a piece of equipment can record, tolerate, or play back represents the loudness dynamic range.

It’s important to note that different pieces of equipment at different stages in the media production and delivery process can have different supported dynamic ranges. In that case, the true dynamic range of the system may be limited by the component with the lowest dynamic range.
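The weakest-link idea can be sketched in a few lines of Python. This is only an illustration: the stage names and stop values below are hypothetical placeholders, not measurements of any real equipment, and the sketch assumes each stage's range is expressed in stops and that the ranges line up with one another.

```python
def system_dynamic_range(stages: dict[str, float]) -> tuple[str, float]:
    """Return the limiting stage and its dynamic range (in stops).

    Assumes every stage passes the signal on unchanged apart from
    clipping it to its own supported range.
    """
    if not stages:
        raise ValueError("at least one stage is required")
    limiting = min(stages, key=stages.get)
    return limiting, stages[limiting]


# Hypothetical chain; the numbers are illustrative only.
chain = {
    "camera": 14.0,
    "processing": 16.0,
    "transmission": 10.0,
    "display": 12.0,
}

name, stops = system_dynamic_range(chain)
print(f"Limited by {name}: {stops} stops")
```

Improving any stage other than the limiting one does not widen what the viewer finally sees, which is why each link in the chain has to be considered.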

If the aim is for a system to reproduce, as accurately as possible, the experience an observer would have if they were actually observing the original event, then that system should have a dynamic range that is equal to, or preferably slightly greater than, the dynamic range that the observer would encounter were they actually at the point of the event.

Unmatched measurement and monitoring performance for content creation and content distribution.

HDR vs SDR

To summarize the differences between HDR and standard dynamic range (SDR):

- HDR video offers a greater range of darks and lights (much higher max brightness)

- In addition to a wider range of supported brightness levels, a larger breadth of colors is available to choose from, especially in the green range (see the color gamut graph below). This set of available colors is referred to as the color gamut.

- Finally, clever use of mathematical transfer functions allows the stored brightness values within a digital video signal to have more granularity in the darker ranges of brightness. This takes advantage of the human eye’s natural ability to detect subtleties in dark and grey lighting better than in very bright lighting.
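As an illustration of the last point, here is a minimal sketch of one such transfer function, assuming the PQ curve defined in SMPTE ST 2084 (one of the HDR specifications named later in this article). The function name `pq_encode` is our own choice for the example. It maps absolute luminance in nits to a normalized signal value between 0 and 1.

```python
def pq_encode(nits: float) -> float:
    """SMPTE ST 2084 (PQ) inverse EOTF: luminance in nits -> signal in 0..1."""
    # Constants as published in ST 2084.
    m1 = 2610 / 16384
    m2 = 2523 / 4096 * 128
    c1 = 3424 / 4096
    c2 = 2413 / 4096 * 32
    c3 = 2392 / 4096 * 32
    y = min(max(nits, 0.0), 10000.0) / 10000.0  # normalize to the 10,000-nit peak
    yp = y ** m1
    return ((c1 + c2 * yp) / (1 + c3 * yp)) ** m2


for level in (0.1, 1, 10, 100, 1000, 10000):
    print(f"{level:>7} nits -> signal {pq_encode(level):.3f}")
```

With this curve, 100 nits lands at roughly the midpoint of the signal range, so about half of the available code values describe the darker 1% of the luminance range, where the eye is most sensitive to small differences.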

Limitations to HDR

However, reality (and financial requirements) often mean that we have to limit the amount of information that we can pass through a system. In an ideal TV, the upper limit for the dynamic range of the video would match the dynamic range of the human visual system. To date, we’ve fallen far short of that.

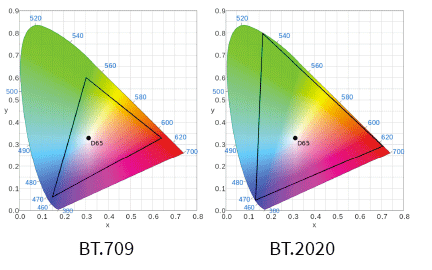

One thing to remember, though: when people talk about HDR, they’re almost always referring to both High Dynamic Range and the ability to reproduce more colors. The latter aspect of video engineering is more correctly called Wide Color Gamut (WCG), and in practice you really can’t do one without the other. The triangles overlaid on the visual color spectrum in the accompanying graphs show different areas of coverage. The BT.709 triangle is the standard that has been used in HD content for many years. The BT.2020 triangle shows much wider coverage of the greens, in addition to extending the red and blue corners out toward the edge of the visible spectrum.

A system that truly supports HDR will need to support both the higher dynamic range of brightness levels AND the wider color gamut to properly reproduce the image for the viewer.
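To put a rough number on the difference between the two triangles, the sketch below compares their areas on the CIE 1931 xy chromaticity diagram using the primaries published in ITU-R BT.709 and BT.2020. Treat the result as indicative only: the xy diagram is not perceptually uniform, so an area ratio is not a count of "how many more colors" a viewer perceives.

```python
# CIE 1931 xy chromaticities of the red, green, and blue primaries.
BT709 = [(0.640, 0.330), (0.300, 0.600), (0.150, 0.060)]
BT2020 = [(0.708, 0.292), (0.170, 0.797), (0.131, 0.046)]


def triangle_area(primaries: list[tuple[float, float]]) -> float:
    """Area of the gamut triangle via the shoelace formula."""
    (x1, y1), (x2, y2), (x3, y3) = primaries
    return abs(x1 * (y2 - y3) + x2 * (y3 - y1) + x3 * (y1 - y2)) / 2


area_709 = triangle_area(BT709)
area_2020 = triangle_area(BT2020)
print(f"BT.709 area:  {area_709:.4f}")
print(f"BT.2020 area: {area_2020:.4f}")
print(f"Ratio:        {area_2020 / area_709:.2f}x")
```

On this measure the BT.2020 triangle covers a little under twice the area of BT.709, with most of the gain coming from the green primary moving toward the top of the diagram.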

4K, 8K, HDR or UHD?

A common point of confusion among consumers is the difference between HDR and frame size. HDR describes how brightness and color are stored within the digital video stream for reproduction on a display. UHD, 4K, and 8K (for example) describe the frame size of the image. While it is much more common for HDR images to accompany larger frame sizes, the two are not directly related, and it is entirely possible to have HDR imagery in standard HD content.

Many UHD, 4K, and 8K televisions are on the market today and not all of them support HDR video. For the best possible viewing experience, be sure to use a display that can support both the required image dimensions and the color reproduction enabled by an HDR video signal.

Truth and Hype on HDR

There’s another aspect we need to consider with HDR: the sensitivity of the eye to variations in brightness. The human eye can resolve a wide range of colors and brightness found in the natural world, but our traditional TV systems can only reproduce a small portion, typically a range from 0.117 nits for full black to 100 nits for full white (a nit is a unit of brightness equal to one candela per square meter). More modern television sets in consumer homes can produce a maximum brightness that is typically between 250 and 500 nits.

In comparison, the human eye can perceive brightness levels in excess of 10,000 nits. So cinematographers and directors of photography have historically needed to adjust the aperture on their cameras so that bright scenes still fit within the available range of the camera sensor, storage format, and TV transmission systems. The same is true for the range of colors that can be reproduced (the “color gamut”), which is again limited to what is referred to as “Rec. 709 colors”. This results in what is now termed “Standard Dynamic Range” (or SDR) images. It’s not perfect, but it’s the best we’ve had so far (and it was largely determined by the available CRT technology at the time the specs were written).
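The nit figures above can be turned into photographic stops (each stop is a doubling of luminance) with a one-line formula. The sketch below is a rough comparison only; it assumes the same 0.117-nit black level for every case, which is our simplification and not a claim about any particular display.

```python
import math


def stops(black_nits: float, white_nits: float) -> float:
    """Dynamic range in stops, i.e. the number of doublings from black to white."""
    if black_nits <= 0 or white_nits <= black_nits:
        raise ValueError("need 0 < black_nits < white_nits")
    return math.log2(white_nits / black_nits)


BLACK = 0.117  # nits; assumed identical for all rows (simplification)
cases = [
    ("traditional SDR", 100),
    ("modern consumer set", 500),
    ("figure quoted for the eye", 10_000),
]
for label, peak in cases:
    print(f"{label:26s} {peak:>6} nits peak -> {stops(BLACK, peak):5.2f} stops")
```

Under that assumption, the traditional 0.117 to 100 nit range works out to a little under 10 stops, while a 10,000-nit peak would add more than 6 further stops.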

Technology advances, and we now have display technologies that can produce a significantly wider black-to-white range, along with being able to reproduce a much wider set of colors. The result of both of these is displays that are capable of producing images which are much more vivid and true-to-life (assuming they were shot with this display technology in mind, of course) – this is what is now referred to as “High Dynamic Range” (HDR) images.

The displays themselves are only part of the story, however. Cinematographers/DPs must now set up their cameras to capture a much wider dynamic range for transmission and processing (not too much of a problem there!). Colorists need to work their magic in an HDR color space (not too much of a problem there, either). But the processing equipment needs to be able to work on signals of 12 bits or more in order to process these images. Generally speaking, this means that these processing devices must have an internal video pipeline of 16 bits. If not, the processing will “crush” the dynamic range of the image, which goes against the whole point of HDR. In the worst case, the devices may throw away bits, which will result in significant contouring.
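A toy example shows why discarded bits lead to contouring. The sketch below quantizes a smooth ramp covering the darkest 1% of a normalized signal at different bit depths and counts how many distinct code values remain. The helper names and the 1% figure are illustrative assumptions, not part of any standard or product.

```python
def quantize(value: float, bits: int) -> int:
    """Map a normalized 0..1 value to an integer code at the given bit depth."""
    return round(value * (2 ** bits - 1))


def distinct_levels(bits: int, darkest_fraction: float = 0.01,
                    samples: int = 10_000) -> int:
    """Count the codes a smooth ramp uses over the darkest part of the range."""
    ramp = (i / (samples - 1) * darkest_fraction for i in range(samples))
    return len({quantize(v, bits) for v in ramp})


def drop_low_bits(code: int, from_bits: int, to_bits: int) -> int:
    """Discard least significant bits, as a too-narrow pipeline might."""
    return code >> (from_bits - to_bits)


for depth in (8, 12, 16):
    print(f"{depth:2d}-bit: {distinct_levels(depth)} levels in the darkest 1%")
```

The fewer levels available, the more a smooth gradient breaks into visible bands. Once a 12-bit code has been shifted down to 8 bits with something like `drop_low_bits`, the lost steps cannot be recovered later in the chain.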

But there’s a bigger problem than that: whilst you can already find TV sets which are labeled as being HDR capable, there are no standards as yet for the format to be used for delivery of HDR material. In fact, the CEA has only just announced the industry definition for HDR compatible displays themselves. We also have to consider legacy support as part of the process: how should an SDR set display an HDR signal? There are several approaches. Some separate the signal into SDR images plus a sidecar transmission that provides the additional information needed to recreate the HDR signal on an HDR display. Others use metadata to tell the SDR set what to do with an HDR signal. HDR is unlikely to achieve widespread adoption until this standardization issue is resolved.

One thing that is certain, though, is that HDR is very much at the forefront of everybody’s mind when considering new television technologies. You only have to see the images produced by a properly sourced HDR display to understand the impact this technology is going to have on TV viewing. In fact, a well set-up 1080p HDR image will blow away an SDR 4K image in almost every respect – we just need to standardize on the delivery format and characteristics so manufacturers know what to design to.

At Telestream, we ensure our customers have access to all the latest technologies. Vantage was engineered with an award-winning 16-bit video processing system and pipeline, so it is perfectly poised to process HDR material.

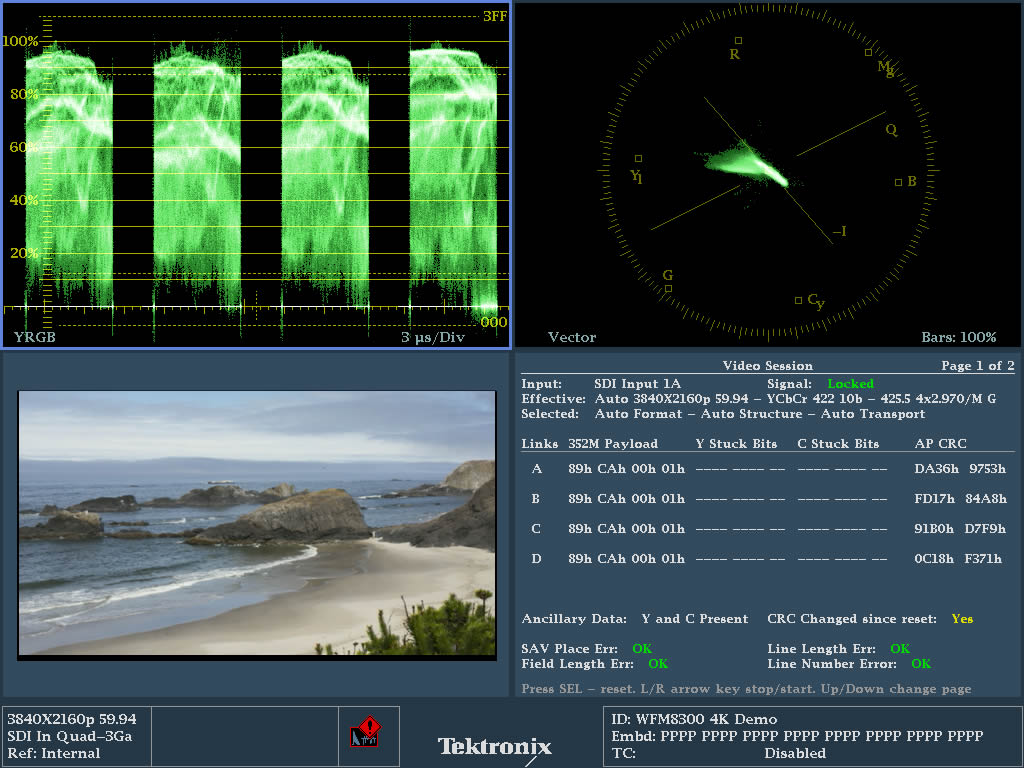

HDR Analysis with PRISM Waveform Monitors

Camera operators face challenges transitioning to 4K and HDR, as HDR production requires more attention during acquisition than SDR production. Whether you’re filming live UHD content like a football game or episodic UHD content like a TV series, you’ll find familiar measurement tools and advanced analytics with Telestream. Telestream’s patented Stop Display, together with our advanced HDR waveform monitor analysis, instantly checks the dynamic range of what is being shot regardless of camera type (SDR, HDR, or both), simplifying the process of monitoring a wide variety of camera gammas and HDR specifications such as ST 2084 PQ and HLG.

Vantage Does That – HDR Media Processing

When working with HDR, what operations in media processing will likely be required?

- Up-convert old libraries of content to HDR.

- Metadata normalization for content from HDR capable cameras. For many years, cameras have supported more stops of dynamic range than televisions can reproduce. Ingesting this content and normalizing it into editing formats requires that the metadata be left as untouched as possible, so that it retains the original image quality and survives the editing process.

- Format conversion. In rare cases, some users may need to cross-convert from one HDR format to another as part of the content normalization for editing.

- Mastering/Editing. The ability to do color grading and convert between color spaces with the best possible reproduction of the original content is extremely valuable at this stage. Creating LUT files for specific conversions to achieve the desired “look” happens at this stage, and Vantage is able to use these LUT files during conversions.

- Preparation of content for distribution. Final encoding into consumer friendly formats for devices, live streaming, or package creation such as IMF. These all require the ability to either retain, up-convert, or down-convert content into the desired distribution color space.

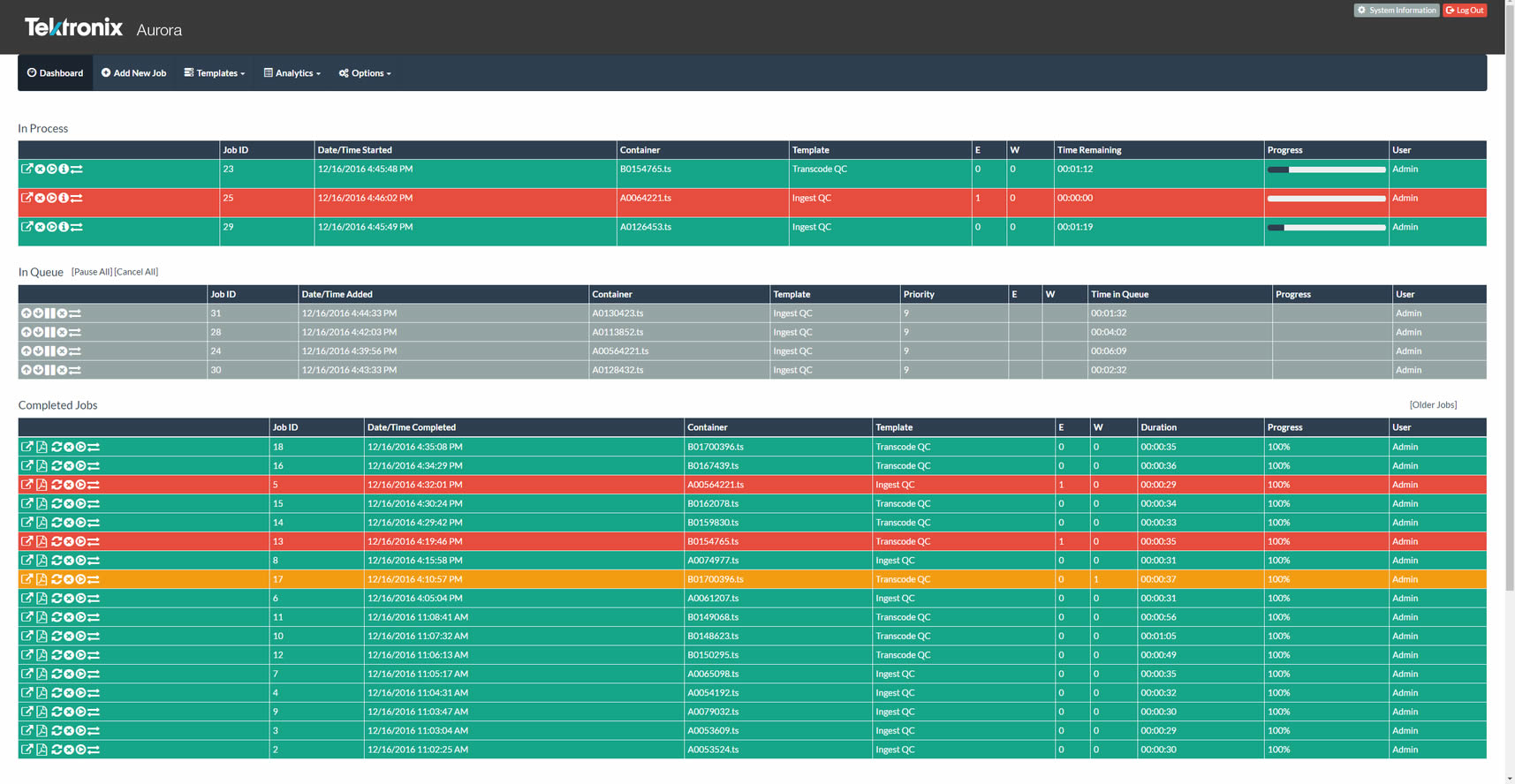

All of the above can be accomplished with the Vantage media processing platform with workflow automation. Vantage is engineered with an award-winning 16-bit video processing system and pipeline, so its users are effectively HDR ready. And for certain input/output configurations, they can already process HDR material today.

HDR Monitoring & QC

Video and Audio test solutions for Analysis, Quality Control, Service Assurance and Regulatory Compliance; supporting a wide range of applications from HD to 4K/UHD, SDI to IP, and Linear Multicast to OTT ABR networks. Ensure that HDR metadata is present and correctly signaled however your content is stored or distributed.

Vantage

Fully scalable workflow automation and media processing platform able to ingest, identify, process, and retain HDR content throughout your workflows. Convert between color spaces, insert missing metadata, and ensure the best possible quality with the Vantage 16-bit video processing pipeline with full support for HDR standards both on premise and in the cloud!

Lightspeed Live Capture

Multi-channel on-premise capture solution for HDR and SDR content ingested through IP or SDI. Encode to enterprise-class codecs and containers with full support for HDR color space signaling and compression.